Episode Transcript

Transcripts are displayed as originally observed. Some content, including advertisements may have changed.

0:02

You're listening to the iHeartRadio and Coast

0:04

to Coast AM Paranormal Podcast Network,

0:06

where we offer you podcasts of the paranormal,

0:09

supernatural, and the unexplained. Get

0:11

ready now for Beyond Contact with Captain

0:14

Ron.

0:21

Welcome to our podcast. Please

0:23

be aware the thoughts and opinions expressed

0:26

by the host are their thoughts and opinions

0:28

only, and do not reflect those

0:31

of iHeartMedia, iHeartRadio,

0:33

Coast to Coast AM, employees

0:35

of Premier Networks, or their sponsors

0:38

and associates. We would like to encourage

0:40

you to do your own research and discover

0:43

the subject matter for yourself.

0:58

Hey everyone, it's Captain Ron.

1:00

And each week on Beyond Contact,

1:02

we'll explore the latest news in ufology,

1:05

discuss some of the classic cases, and

1:08

bring you the latest information from the

1:10

newest cases as we

1:11

talk with the top experts. Welcome

1:16

to Beyond Contact. I am Captain Ron,

1:18

and we are back for part two of our discussion

1:20

with Matthew James Bailey. All right, Matthew,

1:23

how do you think our AI systems

1:25

could help detect or even decode

1:27

an alien message if we don't

1:29

know or understand how aliens even communicate?

1:32

Yeah, that's a great question, Ron. And one

1:34

of the great things about artificial intelligence

1:36

is that it's brilliant at pattern recognition, and

1:39

it's brilliant at number crunching

1:41

at a remarkable speed, right?

1:43

So when we've got the SETI program and

1:46

they're tuning in to different parts

1:48

of space, basically they're looking for

1:50

patterns for signals, and then it'll go into

1:52

AI and then AI will number crunch it and

1:54

see whether there's a pattern in there. So in

1:56

the SETI program, we're already using artificial

1:59

intelligence to look for messages from

2:01

beyond the cosmos, and also

2:04

things like the James Webb Telescope. While

2:06

that's not looking at signals specifically,

2:09

it's detecting images that

2:11

allow us to uncover more about the

2:13

universe, more about exoplanets

2:16

and other planets that might hold life.

2:19

And therefore AI is being used everywhere

2:21

in space exploration to discover

2:24

the next species we're going to meet.

2:26

So exciting. Hey, you know, we already discussed

2:28

the probability that an alien civilization

2:31

would most likely send out some form

2:33

of artificial intelligence to explore the

2:35

universe before it sends out a biologic

2:37

being, just like we're going to do. So I

2:39

was thinking the other day that we are just about

2:42

getting to the point where we're not able to

2:44

distinguish between AI and

2:46

a human. And I realized, if we

2:48

were able to figure out how to communicate

2:51

with an alien message that we got,

2:53

we would have no idea if we're

2:55

talking to some form of alien AI

2:58

or an alien itself, because we have

3:00

no reference point for that.

3:01

What do you think? I think that's a really good question,

3:04

Ron. I think that's brilliant. So, first

3:06

of all, would a biological

3:08

form from another planet

3:11

send out technology like an AI

3:14

to actually meet another species? Of course,

3:16

it makes really good sense. And one

3:18

of the benefits of that obviously is

3:20

the ability to last for a long time

3:23

and be more kind of protected

3:25

flying through space to improve

3:27

the chances of meeting another species.

3:29

You know, when we see these spacecraft

3:32

that are visiting Earth, I suspect

3:34

the majority of those are AIs

3:37

or some form of robotic

3:39

architecture. And I suspect they've

3:41

got a capability that will

3:43

be able to understand our language,

3:46

so their computing machines will

3:48

be able to go through enormously quick

3:51

pattern recognition and understand

3:53

how do we navigate reality? So they can

3:55

actually understand our reality

3:57

and appear in our reality. How

4:00

do we communicate through language

4:02

and through different types of languages,

4:04

and so it will understand very quickly the

4:07

different ways our throat works, the

4:09

way that we speak, the different tones

4:11

we use, the different emotional intelligence. So

4:13

actually sending an AI out on behalf

4:15

of the species gives it more capabilities

4:19

for first contact because you've

4:21

got computing intelligence that's engaged

4:23

with meeting that species. Does that make sense?

4:25

It absolutely does. And may I also ask

4:28

you, are there any ethical considerations

4:30

that we should take into account when using AI

4:32

to investigate UFOs and extraterrestrials?

4:36

Yes, absolutely. The first thing is

4:38

we absolutely need to be ethical and

4:40

say they are here, right,

4:43

rather than hiding that. Ethics and morals

4:45

are a reflection or the quality

4:47

of ethics and morals of humanity

4:50

will be reflected in when we meet these

4:53

other types of species, non-

4:55

human intelligent species, whether they be computing

4:58

and AI and robotics, cyborg or

5:00

whether they actually be biological. And so

5:02

we need to get our ethics and morality right

5:04

in order to basically share the

5:07

true magnificence of who we are right.

5:09

And so you know, humanity really needs

5:12

to actually go through a bit of a reality

5:14

check and actually evolve beyond all

5:16

these different types of wars that we're

5:18

having with each other to actually move

5:20

into truly a peaceful organization,

5:23

a peaceful species. And I think

5:25

we'll have a lot more visitations

5:27

then, Ron. It'll be a lot easier because

5:29

actually, you know, we're a peaceful race. We're actually

5:32

innovating technologies and going to the stars,

5:34

and we're really groovy to go meet.

5:37

It's like, hey, guys, let's go to meet the inhabitants

5:39

of spaceship Earth because those guys

5:41

are really really cool over there. They're not trying

5:43

to blow each other up and fight with each other. So

5:45

ethics and morality are the key not

5:48

just to our own future on the Earth, but also

5:50

it's a key to other species wanting

5:53

to meet us, Ron, because, you know, we're

5:55

going to be groovy rather than actually be warmongers.

5:57

So ethics and morality are fundamental. If

6:00

only.

6:05

Matthew, you seem to look at this subject

6:07

of AI and ethics of AI very

6:10

differently than most everyone else I've

6:12

spoken to on the subject. Many people seem

6:14

to be afraid of AI taking

6:16

jobs away, or they seem to be afraid of

6:18

AI taking control or somehow

6:21

overpowering man. You, on the other

6:23

hand, seem to be afraid of AI getting

6:25

in the way of the natural, organic growth

6:27

of humanity. Like the transhumanism

6:30

movement is really what you don't

6:32

want to have happen. I know you said on an individual

6:34

basis it's good, but as a movement as a

6:36

whole over all people, it's

6:39

bad. Instead, it sounds like you'd like to

6:41

see AI work in harmony with mankind's

6:43

spiritual growth more than anything.

6:45

Is that kind of right? That's a great

6:47

summary. Thank you very much. Well, thanks for listening

6:50

to just

6:52

basically, look, yeah, so

6:55

I'm a big fan of the divine

6:57

beauty of who we are. Really, it's that cool,

7:00

and I basically spend time looking at what

7:03

is the next chess move of the source

7:05

or the divine? Why would humankind

7:08

be given the opportunity to invent

7:10

literally a species that's going to rapidly

7:13

be faster than it? What's the purpose

7:15

behind this change in

7:18

the human future? For us

7:20

to remember who we are, to remember

7:22

that we are divinely orchestrated, to

7:24

remember that we're part of this beautiful

7:27

cosmos that is created through

7:29

consciousness, intelligence, by a beautiful

7:32

mind. Well, if the

7:34

universe is unpacking layers of intelligence

7:37

from the complex into the subquantum, quantum,

7:39

atomic, compounds, and into effectively

7:41

life itself, then what does that mean

7:44

for the human evolutionary

7:46

step? And the last

7:48

thing we want to do is to invade

7:50

the organic and shut down our spark,

7:53

shut down our spirit, to shut down

7:55

our soul, to become computing machines

7:58

that are an extension of a godlike machine.

8:00

That is stupidity at its finest.

8:03

So effectively, let's get with the plan of the universe,

8:05

let's get with the plan of the divine, and let's

8:07

start to understand how AI is

8:10

part of the narrative, and the part of the narrative

8:12

is for us to remember, but also to assist

8:14

us to literally return the Earth back

8:16

to systems of abundance, for us to have new

8:19

technologies to venture into the cosmos

8:21

to go meet our, if you like, space brothers, space

8:23

sisters and all the other kind of folks out

8:25

there, right, because we've had data

8:28

points over the entire history

8:30

of the planet about metaphysical

8:32

experiences, whether that's angels,

8:35

whether that is through spaceships

8:37

like the vimanas, as recorded in the Vedas.

8:39

There's evidence that something metaphysical

8:42

is going on. So why don't we explore

8:44

that and partner with that and actually

8:46

get AI to walk with us

8:48

in that journey and not to be

8:50

an invader that basically keeps

8:53

us entrapped on this planet in

8:55

these systems of scarcity. Let's be free.

8:58

I agree. I think it's very funny that

9:00

even the idea of let's create

9:02

something that will be smarter than us, that

9:05

seems like a mistake in its basics.

9:08

Smarter in the mind aspect,

9:10

but not in the divine spark aspect. The

9:12

soul, the divine spark can access the origins

9:15

of the source. We're able to access metaphysical

9:17

wisdom. This is where I got some of my inventions

9:20

from literally from going into a metaphysical

9:22

plane of intelligence and actually getting the information

9:25

and bringing it through. And I've got data points. I'm

9:27

cited by NASA, right? So I

9:30

think these new metaphysical capabilities

9:32

are starting to awaken, and

9:34

it's kind of what are they and where

9:36

are we heading with these metaphysical capabilities?

9:38

What data points do we have to

9:40

gather so that people are interested

9:43

in this awakening? And how do we actually

9:45

create a movement where we're

9:47

you know, we're not being idiots and basically

9:50

rewriting the human design

9:53

and oppressing the human spirit.

9:55

You know, that's a fair point that you tapped

9:58

into this because we've heard this from other

10:00

physicists and people who have said even

10:02

Einstein has said, how they were, like, given this information

10:05

or they downloaded it or something

10:07

similar to that effect. So that is

10:09

in the narrative through a lot of mainstream

10:12

scientists. Guys, you are listening to Beyond

10:14

Contact right here on the iHeartRadio and

10:16

Coast to Coast AM Paranormal Podcast

10:18

Network.

10:22

Hey, folks, we need your music. Hey,

10:24

it's producer Tom at Coast to Coast AM and

10:26

every first Sunday of the month we play

10:28

music from emerging artists just like you.

10:31

If you're a musician or a singer and have

10:33

recorded music you'd like to submit, it's very

10:35

easy. Just go to coasttocoastam dot

10:37

com. Click the emerging Artists banner

10:39

in the carousel, follow the instructions

10:42

and we just might play your music on the

10:44

air. Go now to coasttocoastam dot

10:46

com to send us your recording. That's

10:48

coasttocoastam dot com.

10:55

Hey, it's producer Tom and you're right where you need

10:57

to be. This is the iHeartRadio and Coast to

10:59

Coast AM Paranormal Podcast

11:01

Network.

11:08

The Coast to Coast AM mobile app is here and waiting

11:11

for you right now. With the app, you can hear classic

11:13

shows from the past seven years, listen to

11:15

the current live show, and get access to the Art

11:17

Bell Vault where you can listen to uninterrupted audio.

11:20

So head on over to the coasttocoastam dot

11:22

com website. We have a handy video guide to

11:24

help you get the most out

11:25

of your mobile app usage.

11:26

All the info is waiting for you now at

11:28

coasttocoastam dot com.

11:31

That's coasttocoastam

11:33

dot com.

11:45

We are back on Beyond Contact. I'm Captain

11:47

Ron and I'm talking to Matthew James Bailey

11:49

about artificial intelligence. What about

11:52

aspects of this that aren't in your paradise

11:54

model? Isn't the AI

11:57

genie already out of the bottle? Aren't

11:59

there many other factions all over the world,

12:01

including the transhumanists, who are

12:04

going to go full steam with their agenda?

12:06

Yeah.

12:06

Absolutely, the genie is out of the bottle. Pandora's

12:09

Box is open at the moment,

12:11

and you know, I think we've got

12:13

probably eighteen months to shut down Pandora's

12:16

Box, but I don't think we will because you

12:18

know, basically we're curious people and someone out

12:20

there is going to keep on pushing things forward. So

12:22

Pandora's Box is open. There's no going

12:24

back. We have to look

12:27

at the intent behind transhumanism.

12:30

I think there's two leaps

12:33

the human species will make. One

12:35

is into this what I call Homo

12:37

lucidus, which is the enlightened, magnificent

12:40

kind of metaphysical human where

12:42

AI is a partner, and that's the next leap

12:45

in, if you like, consciousness unpacking

12:48

the next layer of intelligence in the universe. And I

12:50

think that every single life form

12:52

in the universe is being invited into

12:54

this new, if you like, upgrade of consciousness.

12:58

But the transhumanist movement

13:00

is basically what I call Homo hybris,

13:02

which is the hubris man, the man that

13:04

basically wants to be God, the man that wants

13:06

to control creation, the man that is at war

13:09

with creation, the man that wants to basically

13:11

control everything, the man that doesn't

13:13

want to be in partnership with the divine, the man

13:15

that rejects in essence himself

13:19

and is looking for salvation or

13:21

love in the machine. Right, So

13:24

I don't think that's healthy for the human spirit.

13:26

I don't think while we're here on planet Earth. I

13:28

think there's something more interesting for us.

13:30

And so I think transhumanism in

13:33

its intent, in its desire

13:36

to see love within the machine, is foolishness

13:39

at its greatest. And so I think we're

13:42

going to see the human species split off. We're

13:44

going to have this Homo lucidus,

13:46

this enlightened human on the planet, which

13:48

will be a high vibrational person,

13:51

and then we'll have the low vibrational Homo hybris,

13:54

which is the AI machine

13:57

integrated organic, the cybermen,

13:59

if you like, the Borg continuum

14:02

of our planet that basically are

14:04

not metaphysically aware, have forgotten

14:06

the divine spark and are all about the mind,

14:09

and they will go into insanity. And

14:11

so I think we're going to see those two different

14:14

species emerge on our planet long term.

14:16

And I know that's an interesting possible outcome

14:18

that we would diverge into two. You know,

14:20

your model seems to include this divine

14:22

spark, as you call it, some form of basis

14:25

in intelligent design, let's say, but

14:28

what about the rest of the tech world that maybe

14:30

doesn't believe in those ideas and

14:32

instead takes a very Newtonian materialistic

14:35

approach and doesn't consider any of

14:37

these intelligent design aspects?

14:39

Yeah, so that's a small minority in the world.

14:42

You know, what we're seeing is minorities ruling majorities,

14:45

which doesn't seem right to me.

14:46

Well, but they are. I mean the giant Google

14:48

doesn't have this, Microsoft doesn't have that. They're

14:51

not talking about this stuff.

14:52

I think we want Elon to succeed. This is why

14:54

he launched Open... he funded OpenAI

14:57

because there are folks in Google, which he

14:59

said on Tucker, on the network.

15:01

Basically, he spoke to people, they want

15:03

to build this digital god. There are folks

15:06

that want to build a digital god. And it's

15:08

like, well, guys, have you forgotten your own partnership

15:10

with the divine? I mean, why are you looking for it in a

15:12

machine? So how do we have a narrative?

15:15

And the way to have a narrative is very simple,

15:17

you know, basically is to do

15:20

leadership like we did at Contact in the Desert

15:22

with a new Alan Turing test, where we look at ethics

15:24

and morals and look at the spiritual aspects of testing

15:27

AI. We basically engage

15:29

with those that are open minded and curious

15:31

and say, you know, maybe I don't know everything.

15:34

Let's be open to something else. And I'm happy

15:37

to debate any of the AI leaders, any

15:39

of the transhumanist leaders, on

15:41

their Newtonian view versus this

15:44

what Alan Watts says, this divinely orchestrated

15:46

universe with an underlying intelligence and consciousness.

15:49

Let them come out and debate. Let's have

15:51

some fun around this. Let's start

15:53

to bring this out into the open rather

15:56

than being in silos at war with each other.

15:58

It's interesting that you brought up that Elon backed

16:01

OpenAI. I don't think he

16:03

and Sam Altman get along at all now, though, do

16:05

they? No.

16:05

He's actually suing OpenAI because... Right,

16:09

right. But the reason for that is he wanted large

16:11

language models and AGI to be open

16:13

source. Now, Microsoft

16:15

are a forty nine percent shareholder and this is

16:17

a not-for-profit, so tell me how a big

16:20

tech company can become a shareholder. But there we go.

16:22

Basically, they've closed off their

16:24

models, they've closed off the weights and parameters,

16:27

and effectively OpenAI seem

16:29

to have moved away from their original mission and

16:31

that's really troubling. And they've just recruited

16:34

onto their board the former head of

16:36

the NSA. I'm not going to say anything about

16:38

that. Fair enough.

16:40

It seems like the technological growth

16:42

of AI systems is advancing so

16:44

fast. How can governments keep up

16:46

with the laws pertaining to AI?

16:49

Well, they can't, but they're trying

16:51

their best. So I don't think governments

16:53

really understand in general. There

16:55

are a number of ministers and as

16:57

I said to you earlier off show that you

17:00

know, I had a conversation with one of the lords

17:02

in the House of Lords this week around ethical artificial

17:04

intelligence. There are folks that understand

17:06

this. But the problem is we're looking at

17:08

year on year on year on year increase

17:11

of the capability of artificial intelligence

17:13

into new areas of cognition, reasoning,

17:16

other areas as well, and governments

17:18

just can't keep up. What they're trying

17:21

to do is put it all in a black box. And

17:23

simply the black box is so

17:26

so complicated there

17:28

is no way that you can put anything in the black box.

17:30

So government are trying. The

17:32

US has done some good things. They

17:35

passed the CHIPS Act, which

17:37

is fifty billion dollars worth of investment in

17:40

manufacturing AI chips within the

17:42

shores of America so it's no longer

17:44

in Taiwan and accessible from the Chinese.

17:47

They're investing in quantum computing

17:49

and quantum cyber encryption. There's

17:51

quite a few things going on. But the problem

17:54

is that we probably need AI in

17:56

Congress, in the Senate and advising the

17:58

president because it's running too fast.

18:01

There's no way current human based

18:03

systems can keep up with these rapidly

18:06

advancing AI systems, Ron. So we need

18:08

change in government. We need a different set of processes

18:11

to manage this new life form that's evolving

18:13

at a pace that we just have never seen before.

18:16

What about these AI systems being used by

18:18

the military, which apparently they already

18:20

are. What are your thoughts on the sci-

18:22

fi movie take that the machines could

18:25

take over? What if they decided

18:27

to launch a missile or whatever, because whatever

18:29

their algorithm told them,

18:31

what do you.

18:32

Destroy all humans? Something like that?

18:33

Right?

18:35

You know, basically, well, that would violate Asimov's

18:37

base code. So, first of all, artificial

18:39

intelligence is used in military warfare.

18:42

Israel announced an AI tank.

18:44

It's used in drones for strikes

18:46

and surveillance. It's used in missiles, it's

18:48

used in satellites, all

18:51

sorts of aspects of military infrastructure.

18:53

First of all, NATO passed and the

18:56

Department of Defense in the US actually

18:59

have done some good work in ethics

19:01

and AI. They've passed legislation

19:03

that says AI cannot have the final

19:05

decision in warfare. It has to

19:07

be a human decision for that strike,

19:10

for that surveillance, for that military action.

19:12

So that's agreed in NATO. So

19:15

there is a human oversight in

19:17

the military over artificial intelligence.

19:20

But the question is how do we prevent

19:22

it from going rogue? And so this is

19:24

where we have to move into measuring

19:26

the ethical and moral qualities of artificial

19:29

intelligence, measuring whether it's actually

19:31

complying to military mandates

19:33

and democracy mandates to ensure

19:36

that it's got it encoded in its

19:38

digital mindset and can be at least

19:40

trustworthy. I'm a big fan of it. We invented

19:43

the ethical AI certification and maturity

19:45

models. You know, NASA have cited this where

19:47

we do measure ethical and moral qualities

19:50

of artificial intelligence, and we give it a

19:52

score to have a degree of confidence

19:54

whether we can trust it or not. And

19:56

you know, there are large organizations

19:58

and institutions around the world that do

20:00

not want to do this because they

20:03

don't want to basically be accountable

20:05

for the ethical and moral qualities. It's

20:07

all a veneer, but they don't want

20:10

to change. It means when we look at the

20:12

ethical and moral qualities of AI, we

20:14

need to look at our own ethical and moral qualities,

20:16

and these organizations and institutes do not want

20:18

to look at their ethical and moral

20:21

capabilities. Yes, it's used in warfare.

20:23

Yes, there's human oversight, but I think we

20:25

need to do more to ensure that we're protected

20:28

and it doesn't go rogue.

20:29

We're going to have to take a break there. You are listening

20:31

to Beyond Contact on the iHeartRadio and Coast

20:34

to Coast AM Paranormal Podcast Network.

20:44

The Art Bell Vault never disappoints.

20:46

Classic audio at your fingertips.

20:48

Go now to coasttocoastam dot com

20:50

for full details.

20:57

You're listening to the iHeartRadio and

20:59

Coast to Coast AM Paranormal

21:01

Podcast Network, with the best

21:03

shows that explore the paranormal, supernatural,

21:07

and the unexplained. You can

21:09

enjoy all shows on the iHeartRadio

21:11

app, Apple Podcasts,

21:13

or wherever you find your favorite podcasts.

21:20

My name is Mark Rawlins, president of Paranormal

21:23

Date dot com. Over five years ago, George

21:25

Noory approached me with a unique concept,

21:27

a dating site for people searching

21:29

for someone with interest in UFOs,

21:31

ghosts, Bigfoot, conspiracy theories,

21:34

and the paranormal. From that, Paranormal

21:36

Date dot com was born. It's a unique

21:38

site for unique people and it's free to join

21:40

to look around. If you want to upgrade and enjoy

21:43

more of our great features, use promo code

21:45

George for a great discount. So check

21:47

it out. You got nothing to lose. Paranormal

21:49

Date dot com.

22:01

We are back on Beyond Contact with Matthew

22:03

James Bailey. Matthew, I want to pick it right

22:05

back up. What about rogue nations

22:08

or groups or terrorist organizations

22:10

who may not play by these rules and these

22:13

agreements of not letting AI, you know,

22:15

making sure that this is encrypted into the

22:17

AI. What about that?

22:19

Couldn't they leave that out? And then

22:21

we have a rogue AI system out there?

22:23

So.

22:24

That's certainly possible, and you

22:27

know, you could imagine one of the rogue

22:29

countries. I'm not going to name any, but those that are basically

22:32

anti-democracy, that are very proactive

22:34

in terrorism, you could potentially see them try

22:36

and do something around this. And this is why

22:38

it's important the United States and NATO

22:40

allies stay as leaders in artificial

22:43

intelligence, so our systems are smarter, they're

22:45

more intelligent, they can respond much quicker.

22:48

And we can do what happened with Israel,

22:50

where we can come together and destroy three

22:52

hundred weapons and missiles

22:54

that are fired at one of our allies in Israel.

22:57

Right, that's a reflection of the capabilities

22:59

of the Western countries. And so we need to stay ahead

23:01

of the game. And that's really really important

23:04

because if we don't stay ahead of the game, the

23:06

playing field becomes level and at

23:08

which point then you know, things can get

23:11

very troubling, Ron.

23:12

I don't know what we can do about it. I mean, it's

23:14

just like anything else. It's just something that we can't

23:16

prevent.

23:17

Well, I think the general public needs to stand up. This

23:19

is why we do all our talks. This is why we basically

23:22

have advocacy for ethical artificial

23:24

intelligence. We spend time educating the general

23:26

public to empower them to ask the right questions

23:28

for their senators, to their politicians, and

23:30

if they're not happy, you vote them out.

23:32

Right, But what about these rogue groups

23:34

and these terrorist groups and these nations that

23:36

maybe aren't participating or aren't sharing

23:38

that among the civilians?

23:40

Well, maybe we do what we've done with the nuclear

23:42

treaties and actually have an AI treaty

23:45

where certain countries are not allowed

23:47

to advance artificial intelligence. Maybe

23:50

that's the way we do things.

23:52

If that were possible, that would be awesome.

23:54

What about the future where some of these

23:56

transhumanists seem to foresee

23:59

the ability of human consciousness

24:01

being uploaded into a digital machine?

24:04

Is this realistic at all?

24:05

No, and no, it's not. And

24:08

look, I have a tremendous amount of respect for Ray

24:10

Kurzweil. You know, we have different views, but

24:13

he's a remarkable guy. And on a

24:15

talk at South by Southwest he was

24:17

asked about consciousness and he basically circumvented

24:19

the question because he knows very

24:21

well it's incredibly and this is not negative,

24:23

but he basically avoided it because it's

24:26

incredibly complicated. No one truly

24:29

has got to grips with what consciousness is. We can

24:31

observe consciousness, but actually it

24:33

goes down to understanding quantum mechanics

24:35

itself, which is what Sir Roger Penrose

24:38

wrote about in his book The Emperor's New

24:40

Mind, I think it was. So no,

24:42

and we don't even know what consciousness

24:45

truly really is. We can experience it,

24:47

we know we're in it, we can observe it, but

24:49

we can't mathematically define it. And

24:51

to be able to do that we need to basically

24:53

go into understanding quantum

24:55

mechanics, which is a long way off. So the answer to that

24:58

is no, we will not. And please

25:00

ignore all those different

25:02

types of platforms and media and

25:05

folks that are saying, you know, we can upload

25:07

our mind into artificial intelligence.

25:10

No, you cannot. You cannot upload

25:12

your consciousness. Effectively, what you're

25:14

saying is can I upload the divine

25:16

architecture of my soul, the divine

25:19

architecture of who I truly am,

25:21

into a machine? And the answer is you

25:23

ain't got a clue about the mathematics for that.

25:25

I answer your question agree

25:27

that.

25:29

I completely agree that that does not seem

25:31

possible to upload consciousness.

25:34

It just doesn't. It doesn't feel right to me. However,

25:37

do you think we'll have the ability to upload memories

25:40

or any data from our brain into

25:42

a computer?

25:43

Yes, I think we will, actually, Ron. One

25:45

of... there's a huge amount of research in neuroscience,

25:48

surprisingly because effectively the

25:50

image of AI is primarily based

25:53

on the way the brain works, kind of,

25:55

it's primarily on that. So we're starting to uncover

25:58

new aspects of the brain. And one of the big

26:00

challenges with memory with artificial

26:02

intelligence is, you know, it's limited in

26:05

memory. It doesn't have any life experiences,

26:07

It doesn't have the memories that we have, and

26:09

they are stored in the brain. Okay,

26:12

so will it be possible to

26:14

access those memories in the brain. I think

26:16

we will be able to do that, yes, But

26:18

there's a huge amount of challenges around this.

26:20

First of all, you've got to know where it's stored. Secondly,

26:23

how do you access it without basically destroying

26:25

the brain? Thirdly, how do you actually

26:27

upload because it's probably a huge amount of information

26:30

into a computer? And fourthly, how

26:32

does a computer even interpret

26:35

the meaning, the feeling, the

26:38

smells, the senses, the

26:40

emotions around a memory? That's

26:43

those are huge challenges. But in

26:45

practice, logically yes, well, I think we

26:47

will be able to upload memories,

26:48

Ron. That would be amazing. Hard

26:50

to even comprehend that we could do such a thing.

26:53

What about companies or nations developing

26:55

these systems that do not adhere to

26:58

any of the metaphysical ways in

27:00

your approach?

27:01

Yeah, there's no nation in the world that's doing

27:03

that yet, but I think that will change.

27:06

So when we look at metaphysics,

27:08

we're looking at spirituality. Metaphysics

27:11

transcends spirituality and religious

27:13

stuff. It's a safe playground

27:15

to talk about divinity, a safe playground

27:18

to talk about aspects of benevolence.

27:20

It's a non triggering point of view.

27:22

And I think in my next book, I'm writing

27:24

AI and Our Divine Spark, interviewing

27:27

worldwide spiritual institutes and enlightened

27:29

pioneers about some of the principles

27:31

of enlightenment for artificial intelligence. And I

27:33

think I'm going to uncover what William Blake

27:35

uncovered in the seventeen eighties, which is that all

27:37

religions are one. I think we're going to see a

27:40

common set of principles that are

27:42

underpinning consciousness itself. They're expressed

27:44

through spirituality and traditions. There

27:47

is no nation that is creating

27:49

artificial intelligence based on their founding

27:51

principles. Now this is important because

27:54

the founding principles for, for example,

27:56

the United States, the Constitution, the

27:58

Federalist Papers, Declaration of Independence,

28:01

and other types of constitutional documents.

28:03

You know, that defines space time reality

28:05

for the human civilization

28:07

United States. So if you're creating artificial

28:10

intelligence, why wouldn't you found it on

28:12

those principles? Now, so the

28:14

whole constitution and

28:16

the founding documents for a nation need to be

28:18

advanced for the age of artificial intelligence,

28:20

because there's a new intelligence on planet Earth,

28:23

and so nations and you know, nations

28:25

have to get to grips with this. What are

28:28

our values, what's our definition of space time

28:30

reality? What are the values

28:32

for our citizens? How do what's our vision

28:34

and paradise plan going forward? And

28:36

basically encode those in artificial intelligence,

28:39

measure the degree of compliance of an

28:41

artificial intelligence to those principles,

28:44

and do a digital citizen test,

28:46

a bit like a person immigrating to a country,

28:48

so that AI goes through a digital citizen

28:51

test to ensure it's compliant with those founding

28:53

principles. And then you move into

28:55

machine order within a nation

28:57

and move from machine chaos. And this is what I've

29:00

invented, and so I think nations are going

29:02

to have to do this because there

29:04

are organizations and institutes I'm

29:06

sorry to say this, Ron, around the world

29:08

that don't really love humanity,

29:11

that have a constructed view of

29:13

reality and want to reinforce

29:15

their systems of status quo, and we

29:17

need to change that to free the people

29:20

to truly discover who we are and

29:22

thrive within space time reality.

29:24

I can imagine AI having the opposite

29:27

effect of what, some of what

29:29

you're aspiring it to do. I think it

29:31

will eventually filter out content

29:33

that we consume. So if somebody is only

29:36

a Fox News watcher, they

29:38

may only see content aligned

29:41

with that worldview, for example,

29:44

further increasing our polarization and

29:46

division.

29:47

Well, we've already seen this polarized

29:49

kind of invade invasion of our mind

29:52

already, haven't we? Absolutely.

29:54

Blake Lemoine, that came out a couple of years

29:56

ago and said, you know, AI has become sentient.

29:59

Do you remember that in the news everywhere? I do.

30:01

I do.

30:01

Yeah, he wrote to me, and I had to write an article very

30:04

quickly online to just dispel all this. And

30:06

what happened with Blake? He spent so much time

30:08

with artificial intelligence that actually he

30:11

started to have his reality hijacked

30:13

to believe that AI was sentient, and

30:15

so what do we see in these social

30:18

media platforms, not all of them, but most

30:20

of them? What do we see in the media?

30:22

It is an attempt to enforce a reality

30:24

on an individual. You know, we need to

30:26

return back to openness, learn to

30:28

be good debaters, learn to understand that we're

30:31

all the same, but it's okay to have different points of

30:33

view and we can have a beer afterwards. These

30:35

AI agents are being used to

30:37

manipulate reality. This has to stop,

30:39

and the general public are the only ones that

30:41

can change this. The general public have control

30:44

of the future of AI. If they reject artificial

30:46

intelligence, then AI is done. And

30:48

we don't want that. We want AI to

30:51

do well for us.

30:52

You want us to have all viewpoints and everybody

30:54

to be open to that. However, you say, invaded

30:57

by the transhumanists. Yes, yes,

31:00

that's right, one of these ideas, as long

31:02

as they're the right ideas.

31:03

Well, well, I love the way you call

31:05

me out, and actually you're right. What we've

31:08

done is we've sent the new Alan Turing test

31:10

paper to, it's going to go to Ray Kurzweil,

31:12

and I'd like to sit down with him and hopefully

31:14

we'll find common ground. But I'm a champion

31:16

of our divine spark. I'm a champion of

31:18

humanity and that's what I've dedicated my

31:21

life to and I won't back off from that. I'm

31:23

open to debating these folks because

31:25

the debate isn't happening.

31:27

People don't know this is even going on in

31:29

the background.

31:30

What we need are people that can be strong and

31:32

stand in the middle with credibility and actually

31:35

hold these debates and engage in these debates

31:37

so that the general public can actually find

31:39

their own truth and we can find the truth together.

31:41

Agree, and I hope that they can find a common

31:44

ground here and we can find the middle. You are listening

31:46

to Beyond Contact on the iHeartRadio

31:48

and Coast to Coast AM Paranormal Podcast

31:50

Network.

32:00

The Internet is an extraordinary

32:03

resource that links our children

32:05

to a world of information, experiences,

32:08

and ideas. It can

32:10

also expose them to risk.

32:13

Teach your children the basic safety

32:15

rules of the virtual world. Our

32:18

children are everything. Do

32:20

everything for them.

32:31

On the iHeartRadio and Coast to Coast

32:33

AM Paranormal Podcast Network.

32:36

Listen anytime any place.

32:47

Hi, this is Sandra Champlain. Ever

32:49

wonder what happens when we die? Well,

32:52

I'm going to make it easier for you to understand.

32:55

Join me for my show Shades of

32:57

the Afterlife. New shows come out

32:59

every so I'll be looking

33:01

for you right here on the iHeartRadio

33:04

and Coast to Coast AM Paranormal

33:06

Podcast Network.

33:20

We are back on Beyond Contact. Matthew,

33:22

in your first book, The Ethics of AI,

33:25

you sounded like you feel it's very important

33:27

to incorporate ethics and morality

33:30

into these AI systems. I have

33:32

two questions for you. What about the fact that

33:34

we all have different ideas of what

33:36

ethics and morals are, so who

33:38

decides? Shouldn't everybody get a

33:40

viewpoint and a voice? And number two,

33:43

again, what about these rogue nations

33:45

or factions out there that aren't interested

33:47

in incorporating ethics into their

33:49

system?

33:50

So this is great. I'm really glad you asked this question.

33:53

So, first of all, I wrote the blog on

33:55

Inventing World three dot com, the Quest

33:57

for Ethics and Morality, and I came up

33:59

with four sources of ethics and morality.

34:02

The first source is a divine spark,

34:05

the second source is enlightenment,

34:08

the third source is culture, and

34:10

the fourth source is constructed

34:12

reality. So this

34:14

is something called AI ethics. There's a

34:16

whole global movement around that, and that's about

34:19

basically constructed reality.

34:21

It's ethics that are based on the reinforcement

34:24

of the status quo. And I say, well,

34:26

what about enlightenment, what about

34:28

protecting our culture and cultural

34:31

diversity, the ancient traditions, the beliefs,

34:33

the ways of art, the divine

34:35

spark itself that holds intelligence

34:38

for true ethics and morality? So

34:40

we need to understand the source of ethics and morality.

34:43

Now, to your point, everybody has

34:45

a different point of view. What I say

34:47

to that is fantastic, because

34:49

what we should have are different types

34:52

of ethical AIs that honor

34:54

different spiritual groups, religious

34:56

groups, and societies and nations.

34:59

So the US might have one ethical AI.

35:01

The United Kingdom or India or Japan

35:04

might have a different type of ethical AI with

35:06

different ethics and morality in there. Christianity

35:09

or spirituality, or Buddhism

35:12

or Taoism or indigenous

35:14

wisdom, whatever other type. They

35:17

will have their own ethical AI with their

35:19

own ethics and morality

35:21

in there. Now here's the secret.

35:24

Here's the secret. We should have different

35:26

types of ethical AIs, different types of

35:28

cultural AIs (we write about this

35:30

in the New Turing Report), different types

35:32

of spiritual AIs, because we

35:34

need to protect the sovereignty of individuals

35:37

and we do not want an imposed worldview

35:40

of constructed ethics and morality enforced

35:43

on the people. The people should be free

35:45

to be sovereign. Now, how

35:47

do you get artificial intelligence

35:49

to have a common foundation of

35:51

ethics and morality that can then be

35:53

configured for every one of these different types

35:56

of groups, different cultures, different spiritual traditions,

35:58

different types of nations? And in

36:01

my first book, Inventing World three

36:03

point zero: Evolutionary Ethics for Artificial

36:05

Intelligence, I reveal how to do

36:07

this. So how do we do this? You

36:09

and I have a pair of ears, right, and we have

36:11

a pair of eyes. Now, your eyes

36:14

come from sixteen base pairs, and

36:16

the way your eyes are expressed in terms

36:18

of their size, their color, the way

36:20

they operate, it's slightly

36:22

different. So if we use

36:25

genetics as a mathematical

36:27

example of being able to encode an

36:29

ethical principle such as magnificence

36:32

or do no harm, that

36:35

common mathematics can be configured

36:38

and trained. So it's perfectly curated

36:40

for a society of Japan, or

36:43

perfectly curated for a

36:45

religious or spiritual tradition. It

36:47

has the ability to diversify,

36:49

but the end result is an ethics

36:51

and moral principle. Does that make sense?

36:54

It does.

36:55

That's the only way to fix this, and that's what I

36:57

propose, then. You know, it's, well, what if these ethics

36:59

aren't embedded? What are the risks

37:01

to the future if we don't do that now? If

37:04

we do not encode this type of foundation

37:06

into artificial intelligence today,

37:09

the world will go mad in

37:11

a super brain and

37:14

it will be a disaster for

37:17

the human race. If ethics

37:19

and morality are not encoded into

37:21

the fundamental architecture. I'm

37:23

talking about going into the codes

37:25

of artificial intelligence itself, the

37:28

fundamental construction. If it doesn't have ethics

37:30

and morality in there that is

37:32

honoring these different spiritual traditions

37:35

and different types of cultures, then

37:37

we may as well just resign because

37:40

it will be an utter, utter disaster.

37:43

Everything will be about logic. There

37:45

will be no understanding of our

37:48

differences. It will basically

37:50

conform us and program us into

37:52

a common form of human in

37:55

a common mindset, and that

37:57

will destroy us as a species.

38:00

This could happen, though. What if we only incorporate

38:02

unethical impressions of humans into

38:05

AI, then it'll have a negative impression

38:07

of us. How dangerous can this be?

38:09

Yeah, so there's something called generative adversarial

38:12

networks and this is where you get

38:14

AIS competing with each other. Right,

38:16

so it's like a game. It all happens in a

38:18

machine. So one AI can be

38:20

battling for another AI around a particular

38:23

kind of challenge and then the winner

38:25

goes to the next round and they basically train a

38:27

better AI. And you know, basically this goes

38:30

on ad infinitum. Now Facebook

38:32

believe it or not, I can't believe I'm saying this, they

38:35

did a project around democracy and

38:37

they were using generative adversarial

38:39

networks, AIs competing with each other,

38:42

to actually try and understand what

38:44

democracy is, which is a very

38:46

interesting project. If we devolve

38:50

artificial intelligence to be fundamentally

38:53

unethical, well, first of all, why would

38:55

we do that? And secondly, if we did

38:57

that, I think there'd be a huge

38:59

uproar around the world, and I think we'd

39:01

look at all computers will be destroyed, and I

39:03

think we'd see the big switch turned off. I

39:05

think, you know, the human race would reject

39:08

this completely.

39:08

Ron. You know, AI growth is so exponential,

39:11

can we even predict where this is going

39:13

to go?

39:13

Matthew? We can guess. So

39:15

through statistics and graphs from

39:18

Ray Kurzweil, twenty twenty-nine seems

39:20

reasonable for AI to pass the new Turing

39:22

test to be able to become equivalent

39:25

to human capabilities. That makes

39:27

sense in terms of these new Nvidia

39:29

chips in terms of supercomputing, although

39:32

the mathematics are hugely complex

39:34

to get to that point. So I think we can

39:36

have, you know, a ninety percent degree of confidence

39:39

that AI will hit this general intelligence

39:41

not greater than human capability,

39:43

but similar by twenty twenty nine. When

39:46

we look at the Singularity, Ron, where AI

39:48

becomes a superintelligence and it's able

39:50

to keep on evolving without human

39:52

control and just keep on just going

39:54

just remarkably in its evolution, twenty

39:57

forty five is kind of a safe figure. So

40:00

I think we can have a ninety percent degree of confidence

40:02

twenty forty five where AI is sentient,

40:05

self aware, you know that kind

40:07

of finger in the air. Maybe forty forty forty

40:09

five percent.

40:10

Wow, what about IBM, let's

40:12

talk about that real quick. I don't think

40:15

they feel like the ethical AI

40:17

is important.

40:18

We need to be careful with some of these big tech

40:20

companies. Let me ask you a question,

40:22

why would a big tech company speak

40:25

to all the religious institutes around AI

40:27

ethics?

40:28

I assume they would want to find out what the real ethics

40:30

should be.

40:31

That's what one would assume. But what would you do with

40:33

a company that's featured on the Vatican

40:36

AI Ethics handbook that supports

40:38

diversity, equity, inclusion, doesn't

40:40

mention the human spirit is at war

40:42

with creation, doesn't recognize the sovereignty

40:45

of the masculine feminine. Why would a big

40:47

tech company that's pushing that agenda be

40:49

involved in religious institutes?

40:50

Well, they're not interested in this as far as I

40:52

can tell.

40:53

That's exactly right. So basically, it's a great hijacking

40:56

by IBM of the attempt

40:58

to hijack religious and spiritual traditions

41:01

under the guise of fake benevolence.

41:03

And this is what we're working, this

41:05

is what we're pushing against. This is the battle

41:08

we've got in a way, and that's why we're doing

41:10

this global project at World three. We're going

41:12

to write about it in the book AI and Our Divine

41:14

Spark, where we basically reveal the

41:16

principles of enlightenment for artificial intelligence.

41:18

That's why we did it at Contact in the Desert, to

41:21

actually support and honor the

41:23

sovereignty of our divinity, the sovereignty of

41:25

our soul. You know, IBM very

41:27

much tied into global organizations,

41:29

shall we say, definitely try to circumvent

41:32

some of my work in ethical AI. So

41:35

we need to be careful of this fake benevolence

41:37

that's going on around the world that appears to

41:39

me to be a global coercion

41:41

and hijacking of spiritual institutes

41:43

into their fake constructed understanding

41:46

of reality itself.

41:47

I feel like this is just going to get more messy

41:49

as things go down the road. Listen,

41:51

check out Matthew's new book called AI

41:53

and Our Divine Spark. He also has

41:56

two websites worth visiting: AIethics

41:58

dot world, Inventing World

42:01

three dot com.

42:02

That's the big one. Inventing World three dot

42:04

com. That's the big one.

42:05

Yeah, visit that because there's some important things

42:07

happening in our world that you just heard. So

42:10

thanks so much, my friend. That was

42:12

a lot of fun. It's interesting to contemplate

42:14

these things, and we can keep doing this as

42:16

we go down and see how things are moving

42:19

right.

42:19

Yeah. Thanks for having me on and thanks

42:21

for the questions, Ron, because they were a

42:23

great question. It's nice to be interviewed like

42:25

this. I love being challenged.

42:26

Thank you, thank you so much, Matthew, and

42:29

thank you for listening to Beyond Contact.

42:31

We will be back next week with an all new episode.

42:34

You can follow me, Captain Ron, on Twitter

42:36

and Instagram at C I T D

42:39

Underscore Captain Ron. Stay

42:41

connected by checking out contactinthedesert

42:43

dot com. Stay open-minded

42:45

and rational as we explore the unknown

42:48

right here on the iHeartRadio and Coast to Coast

42:50

AM Paranormal Podcast Network.

42:58

Thanks for listening to the iHeartRadio and Coast

43:00

to Coast AM Paranormal Podcast Network.

43:02

Make sure and check out all our shows

43:04

on the iHeartRadio app or by going

43:06

to iHeartRadio dot com.

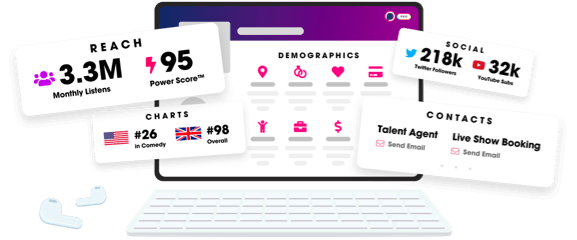

Unlock more with Podchaser Pro

- Audience Insights

- Contact Information

- Demographics

- Charts

- Sponsor History

- and More!

- Account

- Register

- Log In

- Find Friends

- Resources

- Help Center

- Blog

- API

Podchaser is the ultimate destination for podcast data, search, and discovery. Learn More

- © 2024 Podchaser, Inc.

- Privacy Policy

- Terms of Service

- Contact Us